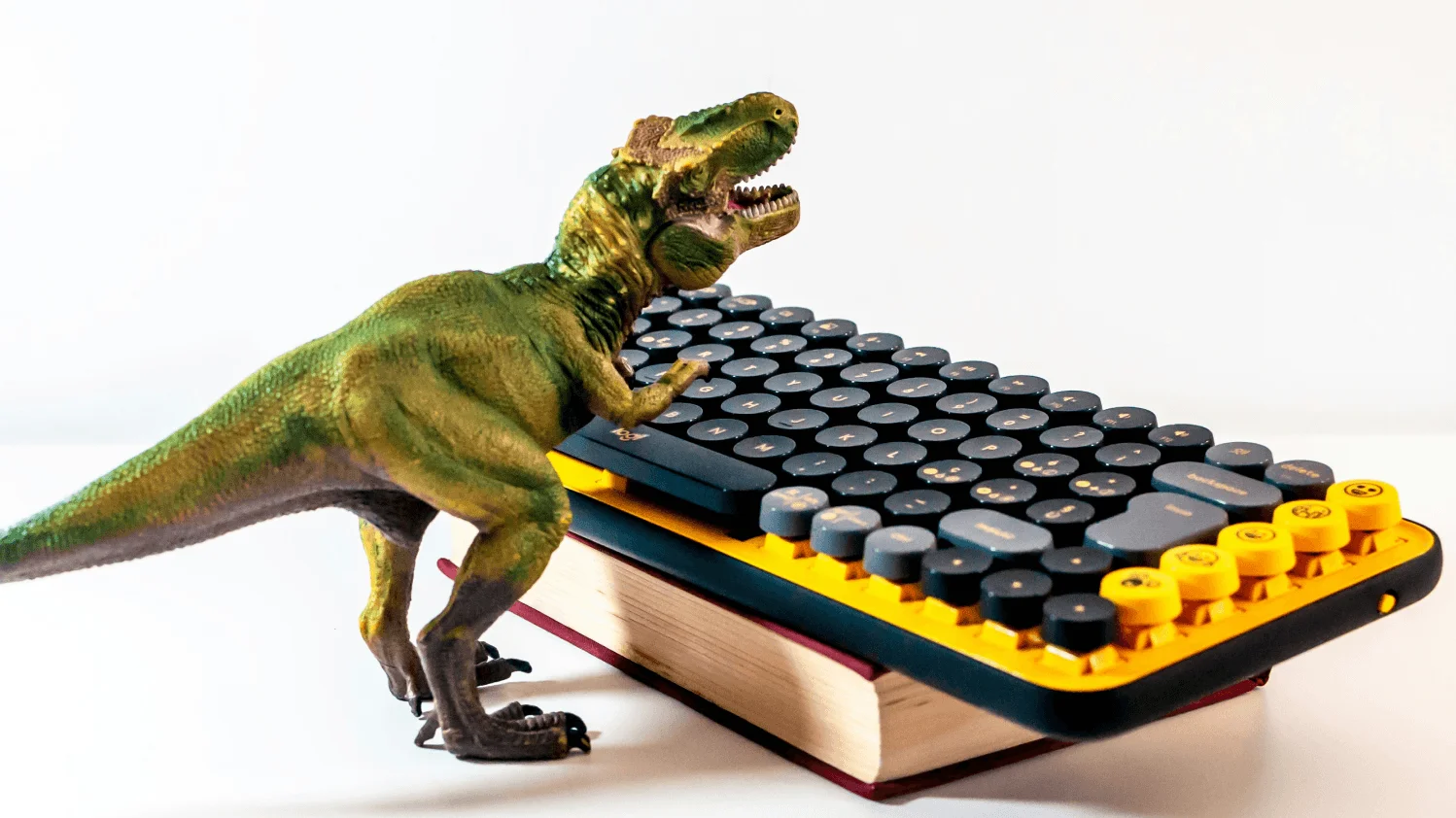

TL;DR: Big Data batch systems were the dinosaurs: massive, powerful, perfectly adapted to a world that no longer exists. Real-time requirements, AI workloads, and the expectation of instant insight are the meteor. The next generation of data systems won't be bigger batch clusters — they'll be adaptive systems (Context Lakes) that serve both analytical and operational workloads with perpetual queries that update as data streams in.

The Dinosaur Problem

Big Data systems were the dinosaurs of the data world: massive, powerful, perfectly adapted to their environment. Hadoop clusters processing petabytes overnight. Batch pipelines running like clockwork. Dashboards updating by morning.

These systems dominated because they solved real problems at unprecedented scale. But like dinosaurs, they were optimized for a world that no longer exists.

The meteor struck: real-time requirements, AI workloads, and the expectation of instant insight. The batch-oriented giants couldn't adapt fast enough.

Mammals vs. Dinosaurs

Mammals didn't outcompete dinosaurs by being bigger or stronger. They won by being adaptive: able to survive in diverse environments, respond to changing conditions, and thrive in niches the giants couldn't reach.

The same pattern applies to data systems. The next generation won't be larger batch clusters. It will be systems that adapt: real-time ingestion, instant queryability, and the flexibility to serve both analytical and operational workloads.

Context Lakes are the mammals of the data world. Not bigger, but faster to adapt. Not more powerful in any single dimension, but capable of thriving across the new landscape.

Perpetual Queries: A Technical Walkthrough

One capability that defines mammalian data systems is the perpetual query: a continuously running computation that updates as data streams in.

Consider a trading application that needs to maintain rolling statistics for every asset: moving averages, volatility measures, and correlation matrices. In a batch system, this requires periodic recalculation, so the numbers are stale between runs. In a streaming system, each statistic can update incrementally, in real time, as trades arrive.
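To make the contrast concrete, here is a minimal Python sketch of the incremental approach. It is an illustration only, not Tacnode's API: the names `RollingStats`, `PerpetualView`, and `on_trade` are hypothetical, trades are assumed to arrive in timestamp order, and volatility is simplified to the population standard deviation of prices within the window.

```python
import math
from collections import defaultdict, deque
from dataclasses import dataclass, field

WINDOW_SECONDS = 24 * 60 * 60  # rolling 24-hour window


@dataclass
class RollingStats:
    """Incrementally maintained price statistics for one asset (hypothetical)."""

    trades: deque = field(default_factory=deque)  # (timestamp, price), oldest first
    total: float = 0.0     # running sum of prices inside the window
    total_sq: float = 0.0  # running sum of squared prices inside the window

    def add(self, ts: float, price: float) -> None:
        # Fold the new trade into the running sums.
        self.trades.append((ts, price))
        self.total += price
        self.total_sq += price * price
        # Evict trades that are 24 hours old or older.
        # Assumes timestamps arrive in non-decreasing order.
        cutoff = ts - WINDOW_SECONDS
        while self.trades and self.trades[0][0] <= cutoff:
            _, old_price = self.trades.popleft()
            self.total -= old_price
            self.total_sq -= old_price * old_price

    @property
    def count(self) -> int:
        return len(self.trades)

    @property
    def mean(self) -> float:
        return self.total / self.count if self.count else math.nan

    @property
    def volatility(self) -> float:
        # Population standard deviation from the running sums.
        # Clamped at zero to absorb floating-point drift.
        if not self.count:
            return math.nan
        variance = self.total_sq / self.count - self.mean ** 2
        return math.sqrt(max(variance, 0.0))


class PerpetualView:
    """Keeps one RollingStats per asset and updates it on every trade."""

    def __init__(self) -> None:
        self.stats = defaultdict(RollingStats)

    def on_trade(self, asset: str, ts: float, price: float) -> RollingStats:
        stats = self.stats[asset]
        stats.add(ts, price)
        return stats


if __name__ == "__main__":
    view = PerpetualView()
    for ts, price in [(0, 10.0), (3600, 20.0), (86400, 30.0)]:
        s = view.on_trade("ACME", ts, price)
        print(ts, s.count, s.mean, s.volatility)
```

Each trade costs a constant amount of work plus evictions, so a reader of `view.stats` always sees current figures rather than the result of the last batch run. A production system would also have to handle out-of-order events and persistence, which this sketch ignores.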

In SQL, this can be expressed as a perpetual materialized view that maintains rolling 24-hour statistics and updates automatically as new trades arrive.

The Evolutionary Advantage

The advantage of perpetual queries isn't just speed. It's the elimination of the batch/real-time dichotomy. The same data model serves both historical analysis and real-time decision-making.

This unification simplifies architecture, reduces operational overhead, and ensures consistency between analytical and operational views. You're not maintaining two systems anymore—you're maintaining one that adapts.

Adapting to Survive

The data landscape is changing faster than most architectures can evolve. AI workloads demand freshness. Agents require consistency. Users expect instant responses.

Organizations that cling to batch-oriented giants will find themselves increasingly outcompeted by nimbler alternatives. The future belongs to systems—and teams—that can adapt.

Code like a mammal. Build for change. Embrace real-time. The meteor has already struck.

Written by Boyd Stowe

Former Couchbase and IBM. Two decades helping enterprises adopt new database paradigms.
